Ask any LLM to analyze a stock. You'll get a report that reads beautifully and falls apart the moment you check a single number.

Stock Analyzer was built to fix that. Seven specialized modules, running in sequence, each one seeing the output of the last. A short-seller tears apart the bull case before anyone gets to set a price target. Python does the math so the LLM never has to. And the system remembers what it said three months ago.

I. The problem: why LLM stock analysis doesn't work

A single LLM call produces a single narrative. The model optimizes for coherence, not correctness. A story that holds together perfectly can still be completely wrong.

| Failure mode | What actually happens |
|---|---|
| Hallucinated financials | Revenue and growth figures look plausible but are quietly wrong. No way to tell without checking each one manually. |
| Confirmation cascade | Business model → risks → valuation. Each section confirms the one before it. One bad assumption echoes through the entire report unchallenged. |
| Black-box DCF | Discount rates and terminal growth appear with zero provenance. The arithmetic can be off by 40%. |
| Decorative risk section | Risks read like they were copied from the company's own annual report. Technically accurate, functionally useless for investment decisions. |

II. The solution: a 7-module adversarial pipeline

Seven modules, DAG-ordered by dependency. Each one has a single job, real financial data as input, and the accumulated output of every module before it.

| Module | Role | Why it matters |
|---|---|---|
| Classifier | Industry tagging | Auto-loads sector-specific prompts (pharma pipeline risk, manufacturing capacity, consumer brand strength) |
| Business Model | Core economics | Revenue drivers, cost structure, moat; anchored to real financial statements via MCP |
| Risk Assessment | Red-flag detection | Risk matrix with valuation discount factors. Catches what the company's own filings won't tell you |
| Outlook | Forward scenarios | The only module with web search. All results fact-checked before flowing downstream |
| Bull/Bear Debate | Adversarial stress-test | Short-seller attacks → bull defends → judge rules |
| Valuation | LLM + Python hybrid | LLM sets assumptions, Python does the arithmetic. Multi-method cross-validation |
| Synthesis | Investment decision | Buy / hold / sell with target price, stop-loss, and explicit expiration conditions |
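The dependency ordering described above can be sketched in a few lines of Python. This is a minimal illustration, not the project's actual code: the `DEPENDENCIES` edges, `run_module`, and `run_pipeline` are hypothetical names, and the LLM call is replaced by a stub so the sketch runs offline. Only the module names come from the table.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: module -> modules it must wait for.
# Module names follow the table; the exact edges are an assumption.
DEPENDENCIES = {
    "classifier": set(),
    "business_model": {"classifier"},
    "risk_assessment": {"business_model"},
    "outlook": {"risk_assessment"},
    "debate": {"outlook"},
    "valuation": {"debate"},
    "synthesis": {"valuation"},
}


def run_module(name, financial_data, prior_outputs):
    """Stand-in for an LLM call; records which earlier outputs were visible."""
    return {"module": name, "saw": sorted(prior_outputs)}


def run_pipeline(financial_data):
    outputs = {}
    # static_order() yields a module only after all of its dependencies.
    for name in TopologicalSorter(DEPENDENCIES).static_order():
        # Pass a copy so a module cannot mutate what earlier modules produced.
        outputs[name] = run_module(name, financial_data, dict(outputs))
    return outputs
```

Calling `run_pipeline({...})` returns one entry per module, and the `saw` field of each entry shows that a module receives the accumulated output of everything that ran before it.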

Four design choices make this pipeline different from chaining prompts together.

The bear goes first

After three modules build up the bull case, the system tries to destroy it.

| Step | Role | Mandate |
|---|---|---|
| 1. Bear | Short-seller research director | Find the weakest assumptions. No balance, no fairness. Attack with data. |
| 2. Bull | Long-side defense | Read the attack. Defend point by point with counter-evidence from the same data. |
| 3. Judge | Neutral arbiter | Issue a verdict on each contested point. Explicit reasoning required. |

Why bear first? By the time a business model and risk section have been written, confirmation bias has already set in. Each module hardens the bull case. The bear breaks it open before the narrative solidifies.

The bear also hunts for echo chambers: when all three prior modules repeat the same unverified assumption as established fact. Three-way agreement might mean robustness. It might also mean one bad assumption echoed through the pipeline unchecked.
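One way to picture the echo-chamber check is as a set comparison. The sketch below assumes each prior module can list the assumptions it relied on as short labels, and that a separate set records which labels are backed by checked data. Both structures and the function name `find_echo_chambers` are hypothetical; in the real system this audit is presumably done by the bear's LLM prompt rather than by exact label matching.

```python
from collections import defaultdict


def find_echo_chambers(module_assumptions, verified):
    """Return assumptions that every module relies on but nobody verified.

    module_assumptions: dict of module name -> set of assumption labels
    verified: set of assumption labels backed by checked data
    """
    cited_by = defaultdict(set)
    for module, assumptions in module_assumptions.items():
        for assumption in assumptions:
            cited_by[assumption].add(module)

    all_modules = set(module_assumptions)
    # Unanimous agreement plus no verification is the warning sign.
    return sorted(
        assumption
        for assumption, modules in cited_by.items()
        if modules == all_modules and assumption not in verified
    )
```

An assumption that all modules share and that is verified passes through; only the unanimous, unverified ones are handed to the bear as attack targets.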

In one run on a shipping company, the first three modules built a classic contrarian narrative: very low leverage (36% debt ratio), counter-cyclical fleet expansion, and dual catalysts from geopolitical disruption and a new free trade zone. It looked like a textbook bottom-fishing opportunity.

The bear tore it apart. It ran an "echo chamber" audit and found all three modules assumed the company's Hainan subsidiary would generate windfall profits from a new trade policy — but the subsidiary already had 6 vessels in operation during Q3 2025, and profits had collapsed 30% that quarter. The catalyst was already priced in and failing.

The bear's core attack: RMB 685M in construction-in-progress was about to convert to fixed assets when 5 new ships launched in 2026. The resulting depreciation surge, hitting a company whose gross margin had already dropped from 36% to 28%, would mathematically destroy earnings growth. The bear assigned a 90% probability of failure to the bull's +12% profit growth forecast.

The judge ruled: bear wins on earnings (depreciation bomb is real), bull wins on solvency (no bankruptcy risk), bull wins on policy timing (bear used Q3 data to disprove a Q1 2026 catalyst — a logical error). Final verdict: deadlock, but the target price was cut from the initial bull estimate by over 35%.

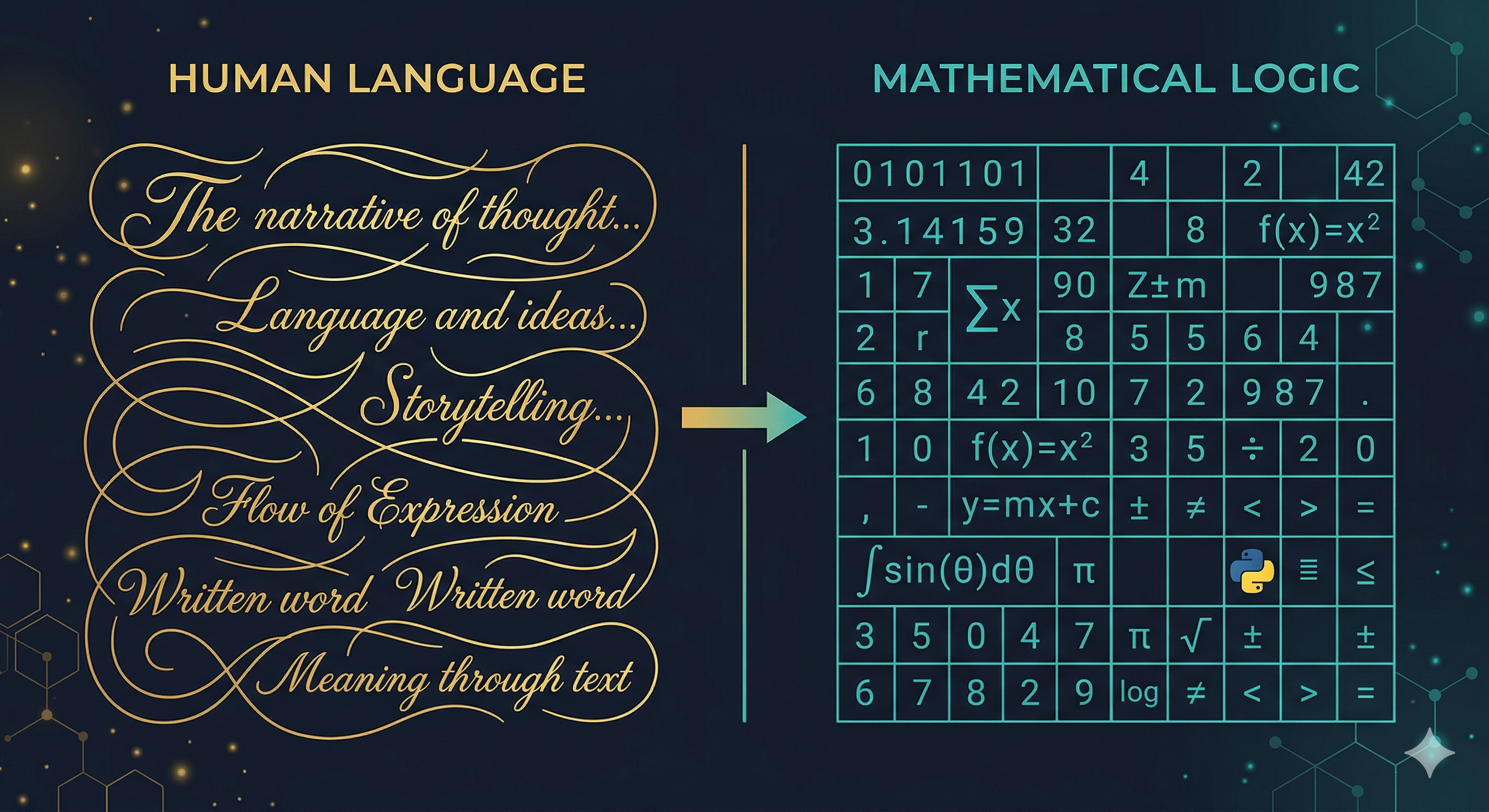

LLM judges, Python calculates

Early versions let the LLM compute DCF directly. The math looked clean. It was sometimes off by 40%. Now, judgment and arithmetic are completely separated:

LLM (judgment) → assumptions.json → Python (math) → calculations.json → LLM (narrative) → report

- Sanity checks: bull target above bear? Margins physically possible? EPS consistent across methods?
- Auto-retry: failed checks trigger assumption regeneration, up to 3 rounds
- Method selection: the LLM picks which methods apply (PE, PB, EV/EBITDA, DCF, dividend yield); Python computes all of them
- Full auditability: every intermediate value saved as JSON, traceable to inputs
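The split between judgment and arithmetic can be sketched as follows. This is an illustration under stated assumptions, not the project's code: `generate_assumptions` is a hypothetical callable standing in for the LLM, the field names in the assumptions dict are invented, "up to 3 rounds" is read as three attempts in total, and the DCF uses a standard Gordon-growth terminal value. The EPS consistency check is left out for brevity.

```python
MAX_ROUNDS = 3  # assumption: three attempts in total


def dcf_value(cash_flows, discount_rate, terminal_growth):
    """Present value of explicit cash flows plus a Gordon-growth terminal value."""
    pv = sum(cf / (1 + discount_rate) ** t
             for t, cf in enumerate(cash_flows, start=1))
    terminal = (cash_flows[-1] * (1 + terminal_growth)
                / (discount_rate - terminal_growth))
    return pv + terminal / (1 + discount_rate) ** len(cash_flows)


def sanity_errors(assumptions):
    """Return a list of human-readable problems; empty means the checks pass."""
    errors = []
    if assumptions["bull_target"] <= assumptions["bear_target"]:
        errors.append("bull target must be above bear target")
    if assumptions["gross_margin"] > 1.0:
        errors.append("gross margin cannot exceed 100%")
    if assumptions["discount_rate"] <= assumptions["terminal_growth"]:
        errors.append("discount rate must exceed terminal growth")
    return errors


def value_with_retry(generate_assumptions):
    """Ask for assumptions, check them, and only then let Python do the math."""
    feedback = []
    for round_number in range(1, MAX_ROUNDS + 1):
        # The previous round's errors are fed back so the LLM can correct them.
        assumptions = generate_assumptions(feedback)
        feedback = sanity_errors(assumptions)
        if not feedback:
            value = dcf_value(assumptions["cash_flows"],
                              assumptions["discount_rate"],
                              assumptions["terminal_growth"])
            return {"rounds": round_number,
                    "assumptions": assumptions,
                    "dcf_value": value}
    raise RuntimeError(f"sanity checks still failing after {MAX_ROUNDS} rounds: {feedback}")
```

Because the returned dict holds both the accepted assumptions and the computed value, it can be written straight to JSON, which is what makes each number traceable to its inputs.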

Search only when it matters, then fact-check everything

Most AI analysis tools give the LLM unrestricted web access. Stock Analyzer does the opposite: six of seven modules have zero search capability. They work exclusively from structured financial data delivered via MCP (Model Context Protocol).

Why restrict search? Giving an LLM search access during business model or risk analysis makes the output worse. The model pulls in secondhand commentary instead of doing the harder work of reasoning about primary financial data. Search is only useful for forward-looking information that doesn't exist in financial statements.

Only the Outlook module gets search. And everything it finds goes through a three-tier fact-check gate before reaching downstream modules:

| Priority | Criteria | Action |
|---|---|---|
| Must-verify | Claim affects core investment thesis | Human review required before proceeding |
| Should-verify | Supporting argument with specific numbers | Flagged for review, analysis continues |
| Low priority | Background context | Passed through with lower confidence tag |

The system extracts every factual claim containing a specific number, categorizes it, and generates fact_check.yaml for human review. Downstream modules see the verification status of each fact and are instructed to never build a core argument on unverified data.
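A rough sketch of the extract-and-triage step is shown below. It is deliberately simplified: the keyword lists `thesis_terms` and `supporting_terms` are a hypothetical stand-in for whatever classification the system actually performs (most likely an LLM judgment), and the function names are invented for illustration. The three tiers and their actions are taken from the table above.

```python
import re

ACTIONS = {
    "must_verify": "human review required before proceeding",
    "should_verify": "flagged for review, analysis continues",
    "low_priority": "passed through with lower confidence tag",
}


def extract_numeric_claims(text):
    """Split text into sentences and keep those that contain a digit."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if re.search(r"\d", s)]


def triage(claim, thesis_terms, supporting_terms):
    """Assign a tier using simple keyword matching (a placeholder heuristic)."""
    lowered = claim.lower()
    if any(term in lowered for term in thesis_terms):
        tier = "must_verify"
    elif any(term in lowered for term in supporting_terms):
        tier = "should_verify"
    else:
        tier = "low_priority"
    # Every claim starts unverified; a human flips this flag after checking.
    return {"claim": claim, "tier": tier, "action": ACTIONS[tier], "verified": False}


def build_fact_check(text, thesis_terms, supporting_terms):
    return [triage(claim, thesis_terms, supporting_terms)
            for claim in extract_numeric_claims(text)]
```

The resulting list of records could then be serialized with any YAML library to produce a file like fact_check.yaml, and the `verified` flag is what downstream modules would consult before leaning on a claim.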

In one run, the Outlook module's web search pulled in 12 factual claims, ranging from fleet capacity data attributed to the Ministry of Transport to a specific port throughput figure ("Yangpu port cargo +43.2% in Q1 2026"). The system auto-classified 4 as must-verify (fleet expansion plans and industry capacity figures that directly affected the valuation), 5 as should-verify, and 3 as low priority. The resulting fact_check.yaml gave the analyst a focused checklist of exactly which numbers to confirm before trusting the report's forward-looking conclusions.

Persistent memory and self-monitoring

Most AI tools are stateless. Stock Analyzer maintains a persistent trace per company — and knows exactly what would change its mind.

After each analysis, the system generates a watchlist: 4-8 conditions, each one specific and testable.

| Watchlist example | Trigger | Thesis impact |
|---|---|---|
| FY2025 earnings | Net profit YoY decline > 20%, or gross margin < 55% | Downgrade earnings forecast, reassess valuation |
| Titanium project milestone | Delay > 6 months, or trial yield below industry avg | Second growth curve collapses, cut long-term target |

A weekly scan checks each item against live news. Triggered items fire an incremental update — every module re-runs with its prior output plus a delta summary. The question is always: does the previous conclusion still hold?
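To show what "specific and testable" can mean in practice, here is a small sketch that encodes the first watchlist row as data and evaluates it. The schema (`metric`, `op`, `threshold`) and the function `triggered_items` are assumptions made for this example, not the actual watchlist.json format. The real scan works against live news, whereas this sketch assumes the relevant figures have already been extracted into a dict.

```python
import operator

OPS = {"<": operator.lt, ">": operator.gt}

# Hypothetical encoding of the "FY2025 earnings" row from the table above.
WATCHLIST = [
    {
        "item": "FY2025 earnings",
        "triggers": [
            # A YoY decline of more than 20% is a growth rate below -0.20.
            {"metric": "net_profit_yoy", "op": "<", "threshold": -0.20},
            {"metric": "gross_margin", "op": "<", "threshold": 0.55},
        ],
        "impact": "Downgrade earnings forecast, reassess valuation",
    },
]


def triggered_items(watchlist, observed):
    """Return items where any trigger fires; missing metrics are skipped."""
    fired = []
    for entry in watchlist:
        for trigger in entry["triggers"]:
            value = observed.get(trigger["metric"])
            if value is not None and OPS[trigger["op"]](value, trigger["threshold"]):
                fired.append({"item": entry["item"],
                              "reason": trigger,
                              "impact": entry["impact"]})
                break  # one fired trigger is enough to flag the item
    return fired
```

Each fired item carries the trigger that caused it, which gives the incremental update a concrete delta to reason about instead of a vague "something changed".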

Over months, a layered history builds up. Round 1 sets the baseline. Round 2 updates after earnings. Round 3 responds to a policy shift. Each round records what changed and why. The system's conviction evolves with evidence, not from scratch.

III. What comes out

One run, one company, ~$1, ~10 minutes. The output is a PDF research report with a complete audit trail:

| Section | Content |
|---|---|
| Industry classification | Auto-detected sector with specialized analysis prompts loaded (pharma, manufacturing, consumer, etc.) |
| Business model | Revenue drivers, cost structure, competitive moat; every claim anchored to financial data |
| Risk assessment | Risk matrix with red-flag detection, valuation discount factors |
| Forward outlook | Bull / base / bear scenarios with search-grounded catalysts, fact-checked |
| Bull/Bear debate | Full transcript: bear attack → bull defense → judge verdict on each contested point |
| Valuation | Multi-method (DCF, PE, PB, EV/EBITDA), Python-computed, with sensitivity analysis |
| Investment decision | Buy / hold / sell, target price, stop-loss, position sizing by investor type, explicit expiration conditions |

Every report ships with a _debug/ folder containing the full prompt sent to each model. Any conclusion can be traced back to its exact inputs. The system also generates a fact_check.yaml for human verification and a watchlist.json for automated monitoring.

One person. Seven modules. A research report that used to require an entire team — generated in 10 minutes for $1. And the system will tell you exactly when it stops believing its own conclusions.